本文最后更新于102 天前,其中的信息可能已经过时,如有错误请发送邮件到big_fw@foxmail.com

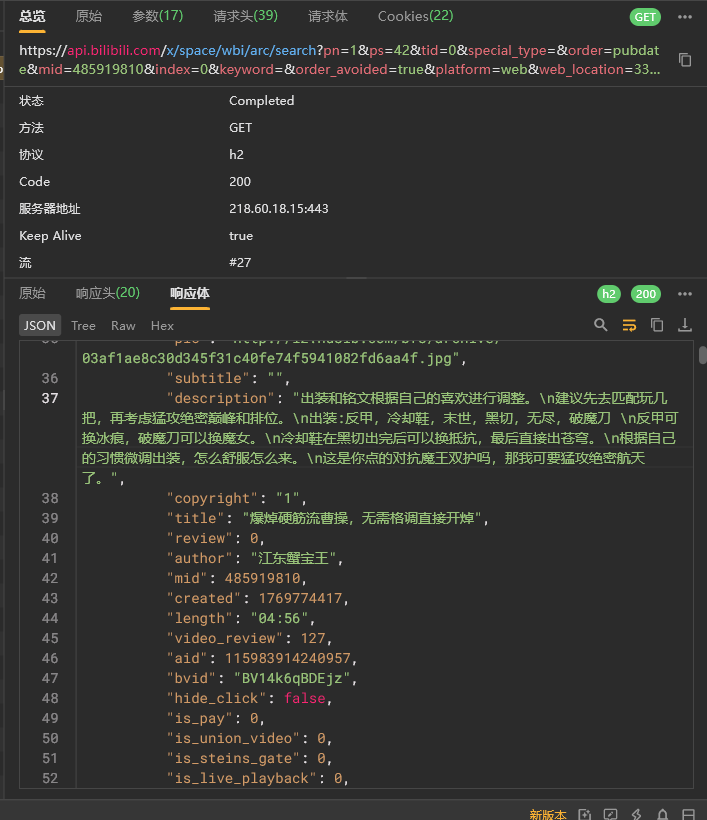

通过小黄鸟监控流量可以看到通过https://api.bilibili.com/x/space/wbi/arc/search的返回值可以按页得到up的所有视频的标题简介时长bvid等信息,从而得到视频url的列表以爬取视频。

尝试爬取改变pn第二页,发现被拦截,分析后发现时间戳与加密参数w_rid没有通过认证,在前端进行js逆向,得到算法:

_IDX = [46,47,18,2,53,8,23,32,15,50,10,31,58,3,45,35,27,43,5,49,33,9,42,19,29,28,14,39,12,38,41,13,37,48,7,16,24,55,40,61,26,17,0,1,60,51,30,4,22,25,54,21,56,59,6,63,57,62,11,36,20,34,44,52]

def build_mixin_key(img_url: str, sub_url: str) -> str:

o = img_url.split("/")[-1].split(".")[0]

a = sub_url.split("/")[-1].split(".")[0]

s = o + a

return ''.join(s[i] for i in _IDX)[:32]

def make_wbi_signed_params(params: dict, img_url: str, sub_url: str) -> dict:

p = {k: v for k, v in params.items() if k != "w_rid"}

p["wts"] = str(int(time.time()))

pairs = []

for k in sorted(p.keys()):

v = p[k]

if v is None:

v = ""

v = re.sub(r"[!'()*]", "", str(v))

pairs.append(f"{quote(str(k), safe='-_.~')}={quote(str(v), safe='-_.~')}")

m = "&".join(pairs)

w_rid = hashlib.md5((m + build_mixin_key(img_url, sub_url)).encode("utf-8")).hexdigest()

p["w_rid"] = w_rid

return p加密算法中的img_url与sub_url每天在https://api.bilibili.com/x/web-interface/nav更新,进行爬取即可,结合时间戳和其他已知参数(如页数)可以得到w_rid,然后通过获取的vlist中的bvid对视频进行爬取,得到分离开的视频文件与音频文件,并通过ffmpeg进行拼接,得到视频。

为了便于将视频进行管理,使用MySQL对视频保存地址进行储存:

for v in vlist:

if num<=get_num(author_id) and mmm>=numm:

title = v["title"]

author= v["author"]

author_id= v["mid"]

length= v["length"]

video_review= v["video_review"]

comment= v["comment"]

play= v["play"]

address= "c/act/%s%s.mp4 "%(author,num)

sql = """

INSERT INTO `id_%s` (title, content, author_id, author,length, video_review, play,address)

VALUES (%s, %s, %s, %s, %s, %s, %s,%s)

"""

cursor.execute(sql, (author_id,title, comment, author_id, author,length, video_review, play,address))

conn.commit()对于已经下载过的作者,将检查已经下载的视频数量与其作者视频数量是否一致,若不一致,则删除最后一个视频(防止上次下载时的打断使得视频文件损坏),并从视频队列末尾继续下载。

def get_num(author_id):

VIDEO_SPACE = f'https://space.bilibili.com/{author_id}/upload/video'

space_html = requests.get(VIDEO_SPACE, headers=headers, cookies=cookies, verify=False).text

tree = etree.HTML(space_html)

ee = tree.xpath("//meta[@name='spm_prefix']/@content")[0]

params = {}

params["mid"]=author_id

params["web_location"]=ee

json=requests.get(NAVNUM_URL, headers=headers, cookies=cookies, verify=False,params=params,).json()

num=json["data"]["video"]

return num

def main_spider(author_id,num=1):

from load import password,db_name

conn= pymysql.connect(

host='localhost',

user='root',

password= f"{password}",

database= f"{db_name}",

charset='utf8mb4',

cursorclass=pymysql.cursors.DictCursor

)

cursor = conn.cursor()

mmm=1

numm=num源码可参考:special-potato/bilispider at main · PlutoNebula/special-potato